The book’s subtitle is: What we know and what we do not know.

Entropy can be defined as the randomness or dispersal of energy of a system. The more disordered a system is, the higher (the more positive) the value of its entropy. However, this description is relatively simple only when the system is in a state of equilibrium.

Equilibrium may be illustrated with a simple example of a drop of food coloring falling into a glass of water. The dye diffuses in a complicated manner, which is difficult to predict precisely. However, after sufficient time has passed, the system reaches a uniform color, a state much easier to describe and explain.

Boltzmann formulated a simple relationship between entropy and the number of possible microstates of a system, which is denoted by the symbol Ω.
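The relationship Boltzmann formulated between entropy and the number of microstates Ω is conventionally written as shown here. The symbols S (entropy) and k_B (the Boltzmann constant) are not introduced in the text and are assumed for this sketch; Ω is the number of microstates as defined above.

$$
S = k_B \ln \Omega
$$

As a quick consistency check under the same assumptions, a system with only one accessible microstate (Ω = 1) has S = 0, and doubling the number of microstates raises the entropy by a fixed amount:

$$
\Delta S = k_B \ln(2\Omega) - k_B \ln \Omega = k_B \ln 2
$$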

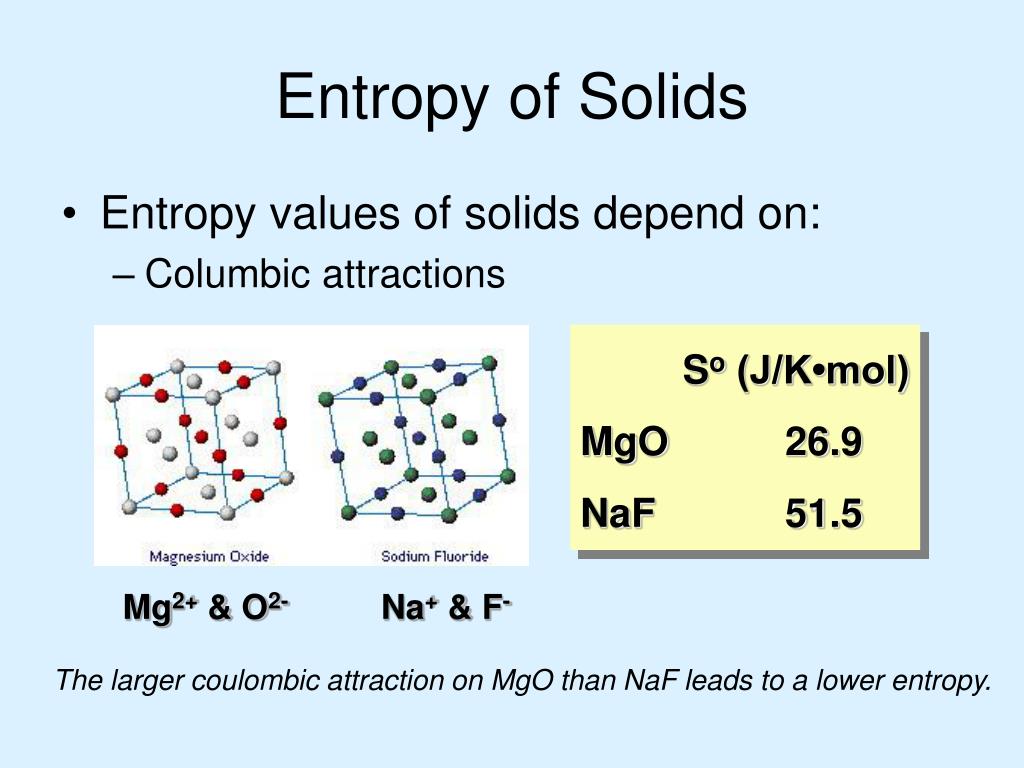

In 2015, I wrote a book with the same title as this article.

A useful illustration is the example of a sample of gas contained in a container. The easily measurable parameters volume, pressure, and temperature of the gas describe its macroscopic condition (state). At a microscopic level, the gas consists of a vast number of freely moving atoms or molecules, which randomly collide with one another and with the walls of the container. The collisions with the walls produce the macroscopic pressure of the gas, which illustrates the connection between microscopic and macroscopic phenomena.

A microstate of the system is a description of the positions and momenta of all its particles. The large number of particles of the gas provides an infinite number of possible microstates for the sample, but collectively they exhibit a well-defined average of configuration, which is exhibited as the macrostate of the system, to which each individual microstate contribution is negligibly small. The ensemble of microstates comprises a statistical distribution of probability for each microstate, and the group of most probable configurations accounts for the macroscopic state. Therefore, the system can be described as a whole by only a few macroscopic parameters, called the thermodynamic variables: the total energy E, volume V, pressure P, temperature T, and so forth.

Entropy is a thermodynamic function that we use to measure the uncertainty or disorder of a system. The entropy of a solid (whose particles are closely packed) is lower than that of a gas (whose particles are free to move). Scientists have also concluded that in a spontaneous process the total entropy of the system and its surroundings must increase. The second law of thermodynamics is best expressed in terms of a change in the thermodynamic variable entropy. The change of entropy can be calculated for some simple processes.
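The text mentions calculating the change of entropy for simple processes without working one through. The sketch below covers two standard textbook cases that are not taken from the article itself: heat exchanged reversibly at constant temperature (ΔS = Q/T, for example melting ice) and the isothermal expansion of an ideal gas (ΔS = nR ln(V2/V1)). The function names and the numerical inputs (1 kg of ice, a latent heat of fusion of about 334 kJ/kg) are illustrative assumptions.

```python
import math

# Molar gas constant in J/(mol*K).
R = 8.314462618


def entropy_change_reversible_heat(q_joules, temperature_k):
    """Entropy change dS = Q / T for heat Q exchanged reversibly at constant T."""
    if temperature_k <= 0:
        raise ValueError("absolute temperature must be positive")
    return q_joules / temperature_k


def entropy_change_isothermal_expansion(n_moles, v_initial, v_final):
    """Entropy change dS = n * R * ln(V2 / V1) for an ideal gas at constant T."""
    if v_initial <= 0 or v_final <= 0:
        raise ValueError("volumes must be positive")
    return n_moles * R * math.log(v_final / v_initial)


if __name__ == "__main__":
    # Assumed example: melting 1 kg of ice at 273.15 K (latent heat ~334 kJ/kg).
    ds_melt = entropy_change_reversible_heat(334_000.0, 273.15)
    print(f"Melting 1 kg of ice: dS = {ds_melt:.1f} J/K")

    # Assumed example: 1 mol of ideal gas doubling its volume at constant T.
    ds_gas = entropy_change_isothermal_expansion(1.0, 1.0, 2.0)
    print(f"Doubling the volume of 1 mol: dS = {ds_gas:.3f} J/K")
```

Both results are positive, which matches the qualitative statements above: the liquid is more disordered than the solid, and a gas with more room to move has more accessible microstates.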
Ludwig Boltzmann defined entropy as a measure of the number of possible microscopic states (microstates) of a system in thermodynamic equilibrium, consistent with its macroscopic thermodynamic properties, which constitute the macrostate of the system.

The idea that entropy is associated with the arrow of time has its roots in Clausius’s statement on the Second Law: Entropy of the Universe always increases. However, the explicit association of the entropy with time’s arrow arises from Eddington.

12.2.5 Nonlinear out-of-plane bending of biomembranes.

Due to mechanical and thermal (entropic) effects, biomembranes undergo local out-of-plane fluctuations, which the present homogenization method aims to quantify at the level of the effective continuum. The bending strain is elaborated based on the geometrical picture shown in Figure 12.11, as.

The NIST publications listed below define entropy in several related ways:

- A measure of the disorder or randomness in a closed system.
- A measure of the disorder, randomness, or variability in a closed system (see SP 800-90B). Min-entropy is the measure used in this Recommendation.
- A measure of the amount of uncertainty an attacker faces to determine the value of a secret. A value having n bits of entropy has the same degree of uncertainty as a uniformly distributed n-bit random value.
- The entropy of uncertainty of a random variable X with probabilities $p_1, \ldots, p_n$ is defined to be $H(X) = -\sum_{i=1}^{n} p_i \log p_i$.

Sources: NIST SP 800-22 Rev. 1a; NIST SP 800-63-3; NIST SP 800-90B; NIST SP 800-133 Rev. 1.
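The information-theoretic definitions quoted from NIST can be made concrete with a short calculation. The sketch below evaluates the entropy of uncertainty H(X) using base-2 logarithms, so the result is in bits, together with min-entropy, taken here in its usual form as the negative base-2 logarithm of the most likely outcome's probability. The function names and the choice of base 2 are assumptions made for illustration and are not taken from the NIST text.

```python
import math


def shannon_entropy_bits(probs):
    """H(X) = -sum(p_i * log2(p_i)); outcomes with p_i == 0 contribute nothing."""
    if any(p < 0 for p in probs):
        raise ValueError("probabilities must be non-negative")
    if not math.isclose(sum(probs), 1.0, abs_tol=1e-9):
        raise ValueError("probabilities must sum to 1")
    return -sum(p * math.log2(p) for p in probs if p > 0)


def min_entropy_bits(probs):
    """Min-entropy: -log2 of the probability of the single most likely outcome."""
    if not math.isclose(sum(probs), 1.0, abs_tol=1e-9):
        raise ValueError("probabilities must sum to 1")
    return -math.log2(max(probs))


if __name__ == "__main__":
    uniform = [1 / 8] * 8          # a uniformly distributed 3-bit value
    biased = [0.5, 0.25, 0.25]     # one outcome is twice as likely as the others

    for name, dist in (("uniform", uniform), ("biased", biased)):
        print(name,
              "Shannon:", shannon_entropy_bits(dist),
              "min-entropy:", min_entropy_bits(dist))
```

For the uniform 3-bit distribution both measures agree at 3 bits, which is the sense in which a value with n bits of entropy is as uncertain as a uniformly distributed n-bit value. For the biased distribution min-entropy is lower than the Shannon figure, because it only considers an attacker's best single guess.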
